The increasing availability of affect-rich multimedia resources has bolstered interest in understanding sentiment and emotions in and from visual content. Adjective-noun pairs (ANP) are a popular mid-level semantic construct for capturing affect via visually detectable concepts such as “cute dog” or “beautiful landscape”. Current state-of-the-art methods approach ANP prediction by considering each of these compound concepts as individual tokens, ignoring the underlying relationships in ANPs. This work aims at disentangling the contributions of the ‘adjectives’ and ‘nouns’ in the visual prediction of ANPs. Two specialised classifiers, one trained for detecting adjectives and another for nouns, are fused to predict 553 different ANPs. The resulting ANP prediction model is more interpretable as it allows us to study the contributions of the adjective and noun components.

Affective computing is the study and development of systems and devices that can recognize, interpret, process, and simulate human affects.

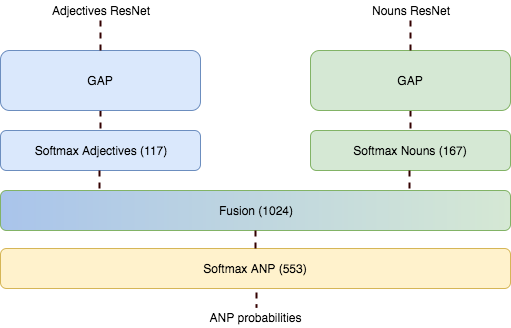

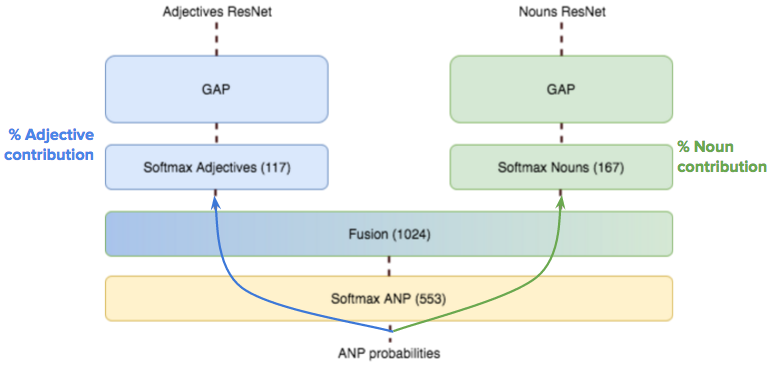

The model we propose is an interpretable architecture for ANP detection, built by fusing the outputs of two specialized networks for adjective and noun classification. We refer to these two specialized networks as AdjNet and NounNet, respectively. The architecture of both specialized networks is based on the well-known ResNet-50 model. Residual Networks are convolutional neural network architectures that introduce residual functions with reference to the layer inputs, achieving better results than their non-residual counterparts.

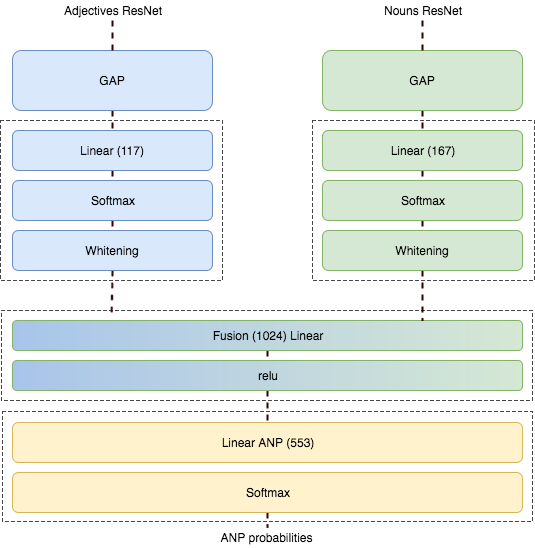

The last layer in ResNet-50, originally predicting the 1,000 classes in ImageNet, is replaced in AdjNet and NounNet to predict, respectively, the adjectives and nouns considered in our dataset. Each of these two networks is trained separately with the same images and the corresponding adjective or noun labels. The probability outputs of AdjNet and NounNet are fused by means of a fully connected neural network with a ReLU non-linearity. On top of that, a linear softmax classifier predicts the detection probabilities for each ANP class considered in the model.
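The fusion stage described above can be sketched in a few lines of NumPy. This is only an illustrative forward pass, not the released implementation: the number of adjectives and nouns, the hidden width, the random weights and the names `fuse` and `params` are all assumptions made for the example. Only the 553 ANP classes come from the text. Random vectors stand in for the real AdjNet and NounNet outputs.

```python
import numpy as np

# Placeholder sizes: only the 553 ANP classes come from the text,
# the other three values are assumptions for this sketch.
N_ADJ, N_NOUN, N_HIDDEN, N_ANP = 100, 150, 256, 553

def softmax(z):
    """Numerically stable softmax over the last axis."""
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def fuse(adj_probs, noun_probs, params):
    """Fuse adjective and noun probabilities into ANP probabilities."""
    x = np.concatenate([adj_probs, noun_probs], axis=-1)
    hidden = np.maximum(0.0, x @ params["W1"] + params["b1"])  # fully connected + ReLU
    return softmax(hidden @ params["W2"] + params["b2"])       # linear softmax classifier

rng = np.random.default_rng(0)
params = {  # random, untrained weights (hypothetical)
    "W1": rng.normal(scale=0.01, size=(N_ADJ + N_NOUN, N_HIDDEN)),
    "b1": np.zeros(N_HIDDEN),
    "W2": rng.normal(scale=0.01, size=(N_HIDDEN, N_ANP)),
    "b2": np.zeros(N_ANP),
}

# Stand-ins for the probability outputs of AdjNet and NounNet on 2 images.
adj_probs = softmax(rng.normal(size=(2, N_ADJ)))
noun_probs = softmax(rng.normal(size=(2, N_NOUN)))

anp_probs = fuse(adj_probs, noun_probs, params)
print(anp_probs.shape)  # (2, 553)
```

Each row of `anp_probs` is a probability distribution over the ANP classes, so it sums to one.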

As shown in the image above, a whitening layer is added on top of the softmax classifier for both nouns and adjectives. This layer simply subtracts the mean and divides by the standard deviation.
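A minimal sketch of such a whitening step follows. The text does not say how the statistics are obtained, so this example assumes a per-class mean and standard deviation estimated once over a set of training outputs. The function names `fit_whitening` and `whiten`, the `eps` term and the synthetic Dirichlet data are illustrative choices.

```python
import numpy as np

def fit_whitening(train_probs):
    """Estimate per-class mean and standard deviation (assumed: over training outputs)."""
    return train_probs.mean(axis=0), train_probs.std(axis=0)

def whiten(probs, mean, std, eps=1e-8):
    """Subtract the mean and divide by the standard deviation."""
    return (probs - mean) / (std + eps)  # eps guards against division by zero

rng = np.random.default_rng(0)
# Synthetic stand-in for softmax outputs: 1000 samples over 5 classes.
train_probs = rng.dirichlet(np.ones(5), size=1000)

mean, std = fit_whitening(train_probs)
whitened = whiten(train_probs, mean, std)
```

After whitening, each class dimension has approximately zero mean and unit variance over the data used to fit the statistics.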

To make it possible to compute the Deep Taylor Decomposition across the fusion layer, this layer does not use Batch Normalization, even though it is used in the noun and adjective classifiers.

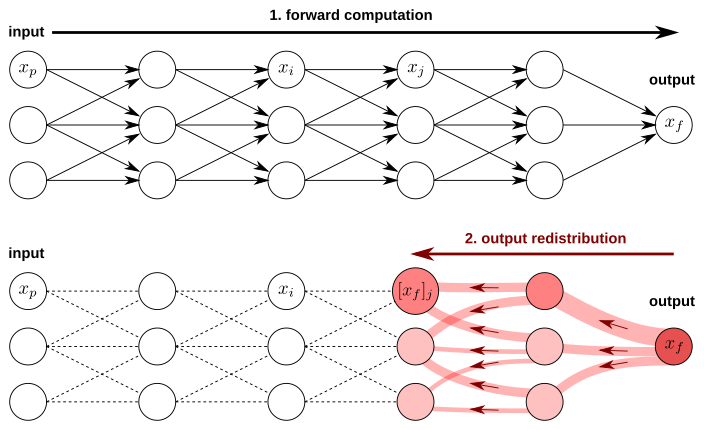

To compute how much the noun and the adjective contribute to the prediction of an ANP, we use Deep Taylor Decomposition (DTD). DTD is a method for explaining individual neural network predictions in terms of the input variables. It operates by running a backward pass on the network using a predefined set of rules. DTD has been used to explain state-of-the-art neural networks for computer vision.
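To give a feel for what one of these backward rules looks like, the sketch below applies the z+ rule, one of the standard DTD propagation rules, to a single linear layer. It is an illustration only: the text does not state which rule is used at each layer, and the z+ rule assumes non-negative inputs, so whitened inputs that can be negative would call for a different rule. The function name `zplus_backward` and the toy numbers are made up for the example.

```python
import numpy as np

def zplus_backward(x, W, relevance_out, eps=1e-9):
    """Redistribute output relevance to the inputs with the z+ rule.

    x:             (n_in,)  non-negative layer inputs
    W:             (n_in, n_out) layer weights
    relevance_out: (n_out,) relevance assigned to the layer outputs
    """
    W_pos = np.maximum(W, 0.0)   # keep only the positive weights
    z = x @ W_pos + eps          # positive pre-activations, one per output
    s = relevance_out / z        # relevance per unit of pre-activation
    return x * (W_pos @ s)       # share of each input in each output

# Toy example with made-up values.
x = np.array([0.2, 0.5, 0.3])
W = np.array([[1.0, -0.5],
              [0.5,  2.0],
              [-1.0, 0.3]])
relevance_out = np.array([0.7, 0.3])

relevance_in = zplus_backward(x, W, relevance_out)
```

The rule is conservative: the relevance arriving at the inputs sums to the relevance that was present at the outputs, so contributions can be compared across inputs.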

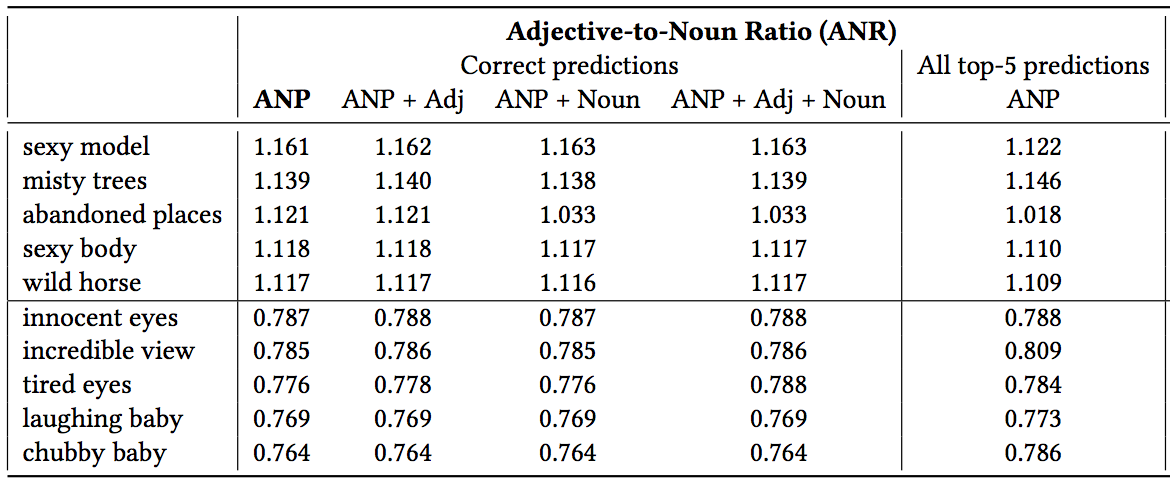

DTD is applied through the ANP softmax layer and the fusion layer in order to calculate the contribution of each noun and adjective class. Once we know how much each noun and adjective has contributed to each ANP, we can compute the proportion of the contribution that comes from nouns and from adjectives. We define the Adjective-to-Noun Ratio (ANR) as the normalized contribution of the adjectives with respect to the nouns during the prediction of an ANP.
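As a rough illustration, the ANR can be computed from a vector of per-class relevances. The exact normalization is not spelled out here, so this sketch assumes the simplest reading: total adjective relevance divided by total noun relevance. The function name, the layout of the relevance vector (adjectives first, then nouns) and the numbers are hypothetical.

```python
import numpy as np

def adjective_to_noun_ratio(relevance, n_adj):
    """Ratio of total adjective relevance to total noun relevance.

    relevance: 1-D array, adjective relevances followed by noun relevances
               (this layout is an assumption of the sketch)
    n_adj:     number of adjective classes
    """
    adj_total = relevance[:n_adj].sum()
    noun_total = relevance[n_adj:].sum()
    return adj_total / noun_total

# Made-up relevances for 2 adjectives followed by 3 nouns.
relevance = np.array([0.3, 0.3, 0.2, 0.1, 0.1])
anr = adjective_to_noun_ratio(relevance, n_adj=2)
```

With these toy values the adjectives carry more relevance than the nouns, so the ratio is above 1.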

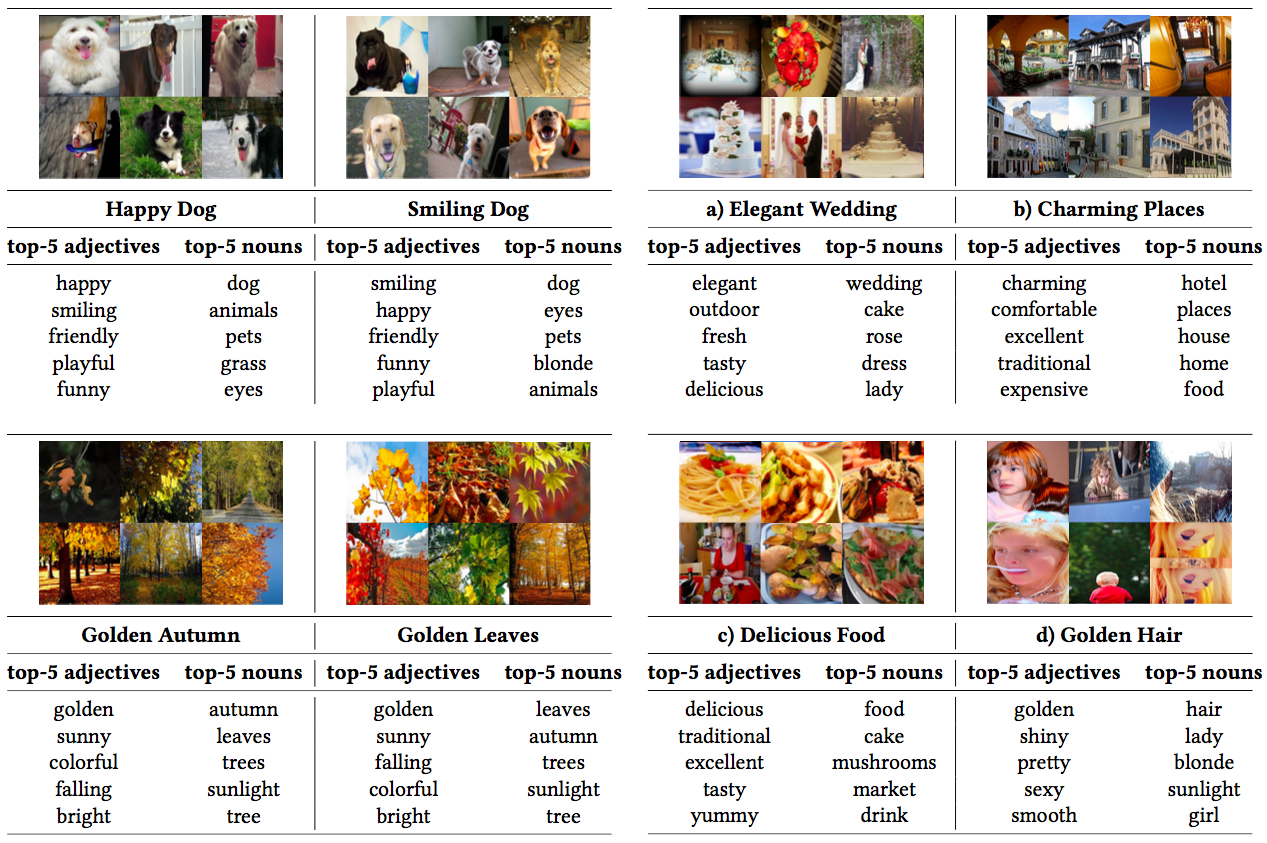

The adjectives and nouns that contribute most to an ANPnet prediction can also be used as semantic labels themselves. This way, our model can detect in a single pass additional concepts related to the predicted ANP, with applications to image tagging, captioning or retrieval. In the two left columns we can see ANPs with equivalent meanings (golden autumn/golden leaves and happy dog/smiling dog) whose contributions are the same. In the right columns, example a) shows how ANPnet learned that the most related concepts for an “elegant wedding” scene are the nouns “cake”, “rose”, “dress” and “lady”, and the adjectives “outdoor”, “fresh”, “tasty” and “delicious”.
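Selecting the most contributing labels amounts to a top-k lookup over the relevance scores. The sketch below shows one way to do it. The helper `top_contributors` is a hypothetical name, the relevance values are made up, and the label "car" is added only to show a concept being filtered out.

```python
import numpy as np

def top_contributors(relevance, labels, k=4):
    """Return the k labels with the highest relevance, best first."""
    order = np.argsort(relevance)[::-1][:k]  # indices sorted by descending relevance
    return [labels[i] for i in order]

# Noun labels from the "elegant wedding" example plus one hypothetical extra.
nouns = ["car", "dress", "cake", "lady", "rose"]
noun_relevance = np.array([0.10, 0.20, 0.30, 0.15, 0.25])  # made-up scores

top_nouns = top_contributors(noun_relevance, nouns, k=4)
print(top_nouns)  # ['cake', 'rose', 'dress', 'lady']
```

The same helper can be applied to the adjective relevances to obtain adjective tags.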

In this table we can see the highest and lowest ANRs. An ANR higher than 1 (upper part of the table) means that the contribution of the adjective is higher than the contribution of the noun. The lower part of the table shows the ANPs with the lowest ANR.

Have a look at our code in our GitHub repository.

Delia Fernandez, Alejandro Woodward, Victor Campos, Xavier Giro-i-Nieto, Brendan Jou and Shih-Fu Chang, "More Cat than Cute? Interpretable Prediction of Adjective Noun Pairs". ACM Multimedia 2017 Workshop on Multimodal Understanding of Social, Affective and Subjective Attributes (MUSA2). Mountain View, California, USA. (23rd October 2017)