Introduction

We present a recurrent model for semantic instance segmentation that sequentially generates pairs of masks and their associated class probabilities for every object in an image. Our proposed system is trainable end-to-end, does not require post-processing steps on its output and is conceptually simpler than current methods relying on object proposals. We observe that our model learns to follow a consistent pattern to generate object sequences, which correlates with the activations learned in the encoder part of our network. We achieve competitive results on three different instance segmentation benchmarks (Pascal VOC 2012, Cityscapes and CVPPP Plant Leaf Segmentation).

If you find this work useful, please consider citing:

Amaia Salvador, Miriam Bellver, Manel Baradad, Ferran Marques, Jordi Torres, Xavier Giro-i-Nieto,

"Recurrent Neural Networks for Semantic Instance Segmentation" arXiv:1712.00617 (2017).

Model

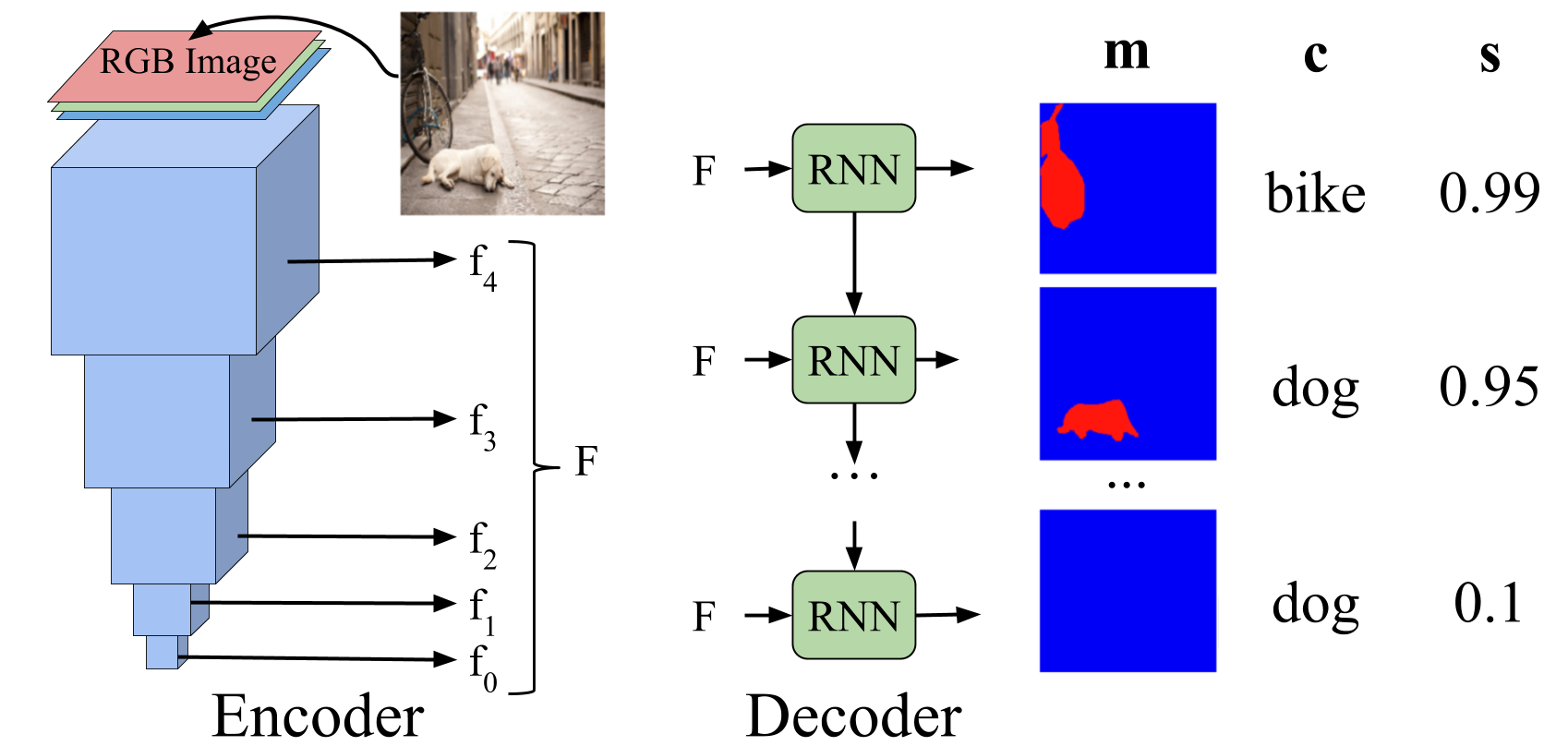

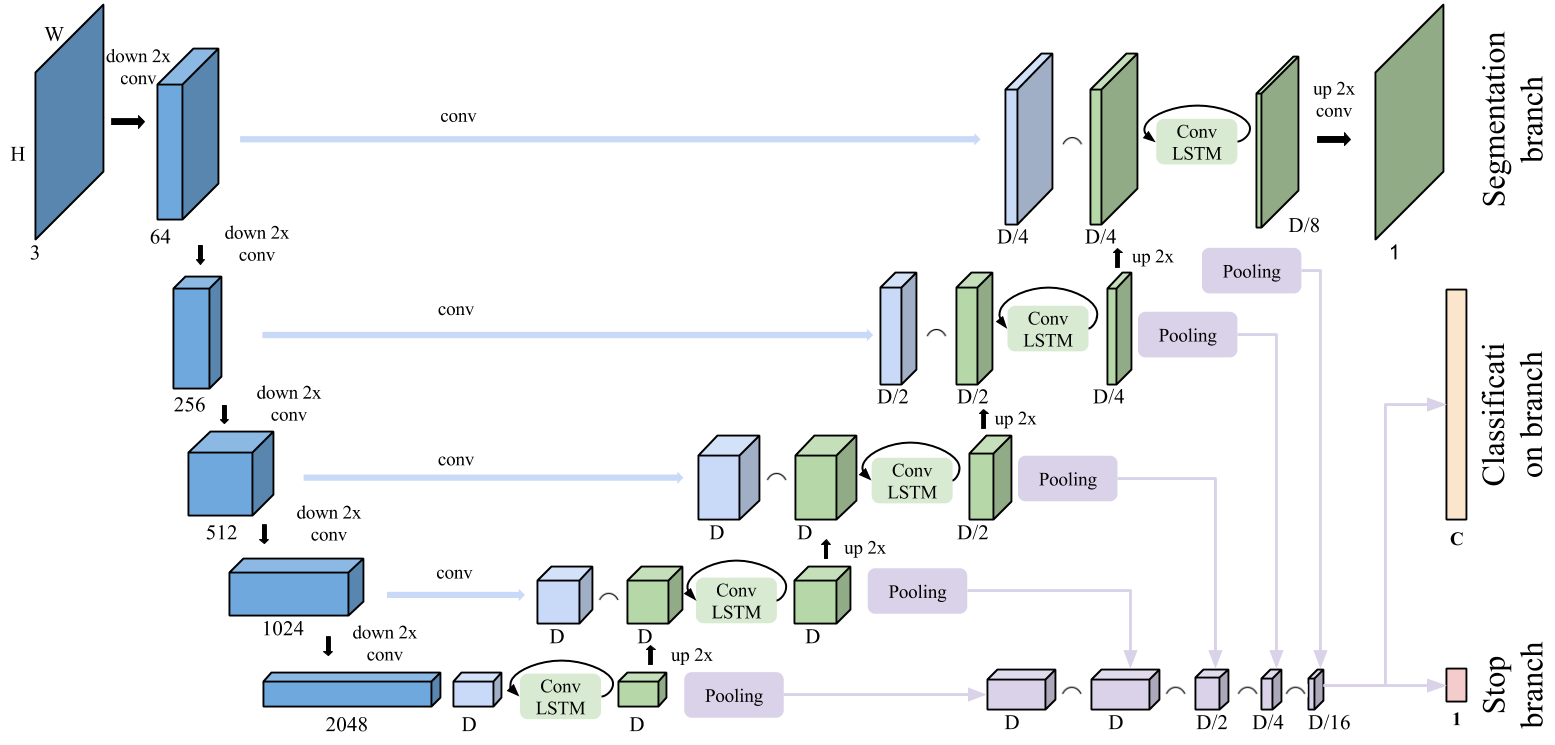

We design an encoder-decoder architecture that sequentially generates pairs of binary masks and categorical labels for each object in the image.
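To illustrate what sequential generation means at inference time, here is a minimal sketch of a loop that calls a per-step decoder, collecting one mask and one label per step, until the predicted stop probability (produced by the fully-connected heads described below) exceeds a threshold. It is not the project's code: `generate_sequence`, `toy_step`, both 0.5 thresholds, `max_steps`, and the choice to discard the output of the step that triggers stopping are illustrative assumptions.

```python
import numpy as np


def generate_sequence(step_fn, state, max_steps=10, stop_threshold=0.5):
    """Call a recurrent decoder step by step until it signals that no objects remain.

    step_fn(state) -> (mask, class_probs, stop_prob, new_state) is a hypothetical
    stand-in for one time step of the decoder (one predicted object per step).
    """
    masks, labels = [], []
    for _ in range(max_steps):
        mask, class_probs, stop_prob, state = step_fn(state)
        if stop_prob > stop_threshold:
            # Assumption: the output of the step that triggers stopping is discarded.
            break
        masks.append(mask > 0.5)  # binarize the sigmoid mask (0.5 is an assumed threshold)
        labels.append(int(np.argmax(class_probs)))
    return masks, labels


def toy_step(t):
    """Hypothetical decoder step: one horizontal stripe per object, stop after three."""
    mask = np.zeros((4, 4))
    mask[t % 4, :] = 0.9
    class_probs = np.eye(3)[t % 3]
    stop_prob = 1.0 if t >= 3 else 0.0
    return mask, class_probs, stop_prob, t + 1


masks, labels = generate_sequence(toy_step, state=0)
print(len(masks), labels)  # 3 [0, 1, 2]
```

Because the loop ends on a learned stop signal, the number of predicted objects varies per image and no separate filtering step over the outputs is needed.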

Our model is composed of a series of recurrent modules (Convolutional Long Short-Term Memory, ConvLSTM) that are chained with upsampling layers in between to predict a sequence of binary masks and associated class probabilities. Skip connections are incorporated by concatenating the upsampled output of each ConvLSTM with the output of the base-model convolutional layer that matches the current feature resolution. Binary masks are finally obtained with a 1x1 convolution followed by a sigmoid activation.
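The NumPy sketch below illustrates one way to wire a two-stage decoder of this kind: a ConvLSTM cell, 2x upsampling, concatenation with same-resolution encoder features, and a 1x1 convolution with sigmoid for the mask. Layer sizes, the random weights and stand-in encoder features, nearest-neighbour upsampling, and feeding the concatenated tensor into the next ConvLSTM are assumptions made for illustration, not settings taken from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def conv2d_same(x, w, b):
    """'Same'-padded 2-D convolution (cross-correlation, as in most DL libraries).
    x: (C_in, H, W), w: (C_out, C_in, k, k) with odd k, b: (C_out,)."""
    c_out, _, k, _ = w.shape
    p = k // 2
    xp = np.pad(x, ((0, 0), (p, p), (p, p)))
    h, wd = x.shape[1:]
    out = np.zeros((c_out, h, wd))
    for i in range(k):
        for j in range(k):
            out += np.einsum("oc,chw->ohw", w[:, :, i, j], xp[:, i:i + h, j:j + wd])
    return out + b[:, None, None]


def convlstm_step(x, h, c, w, b):
    """One ConvLSTM update: all gates are convolutions over [input, previous hidden]."""
    z = conv2d_same(np.concatenate([x, h], axis=0), w, b)
    i, f, o, g = np.split(z, 4, axis=0)
    c_new = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
    h_new = sigmoid(o) * np.tanh(c_new)
    return h_new, c_new


def upsample2x(x):
    """Nearest-neighbour 2x upsampling of a (C, H, W) tensor."""
    return x.repeat(2, axis=1).repeat(2, axis=2)


def init(c_out, c_in, k):
    return rng.normal(0, 0.1, (c_out, c_in, k, k)), np.zeros(c_out)


hid = 6
coarse = rng.normal(size=(8, 4, 4))  # hypothetical deepest encoder features
skip = rng.normal(size=(4, 8, 8))    # hypothetical encoder features at 8x8
w1, b1 = init(4 * hid, 8 + hid, 3)          # ConvLSTM at 4x4
w2, b2 = init(4 * hid, hid + 4 + hid, 3)    # ConvLSTM at 8x8: [upsampled h1, skip] + own hidden
w_mask, b_mask = init(1, hid, 1)            # 1x1 conv producing mask logits

state1 = (np.zeros((hid, 4, 4)), np.zeros((hid, 4, 4)))
state2 = (np.zeros((hid, 8, 8)), np.zeros((hid, 8, 8)))
masks = []
for t in range(2):  # one iteration per predicted object
    h1, c1 = convlstm_step(coarse, *state1, w1, b1)
    x2 = np.concatenate([upsample2x(h1), skip], axis=0)  # skip connection
    h2, c2 = convlstm_step(x2, *state2, w2, b2)
    state1, state2 = (h1, c1), (h2, c2)  # states carry over to the next object
    masks.append(sigmoid(conv2d_same(h2, w_mask, b_mask))[0])

print(masks[0].shape)  # (8, 8)
```

Because the hidden and cell states are carried from one iteration to the next, the mask produced for each object in this sketch is conditioned on the objects already predicted.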

We concatenate the side outputs of all ConvLSTM layers and apply per-channel max-pooling to obtain a hidden representation, which serves as input to the two fully-connected layers that predict the categorical labels and the stopping probabilities.
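A minimal sketch of the pooling and prediction heads follows. It assumes that per-channel max-pooling means a global spatial max over each channel, and it uses a softmax for the class head and a sigmoid for the stop head; the function name `heads`, the layer sizes, and these activation choices are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)


def softmax(z):
    e = np.exp(z - z.max())  # subtract the max for numerical stability
    return e / e.sum()


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def heads(side_outputs, w_cls, b_cls, w_stop, b_stop):
    """Pool ConvLSTM side outputs and predict class and stop probabilities.

    side_outputs: list of (C_i, H_i, W_i) hidden states, one per ConvLSTM layer.
    Taking the spatial max of every channel and concatenating the results gives
    the same vector as concatenating first, while allowing different H_i, W_i.
    """
    pooled = np.concatenate([h.max(axis=(1, 2)) for h in side_outputs])
    class_probs = softmax(w_cls @ pooled + b_cls)  # categorical label distribution
    stop_prob = sigmoid(w_stop @ pooled + b_stop)  # scalar stop probability
    return class_probs, float(stop_prob)


side = [rng.normal(size=(6, 4, 4)), rng.normal(size=(6, 8, 8))]
n_classes = 20  # e.g. the 20 Pascal VOC object categories
w_cls, b_cls = rng.normal(0, 0.1, (n_classes, 12)), np.zeros(n_classes)
w_stop, b_stop = rng.normal(0, 0.1, 12), 0.0
class_probs, stop_prob = heads(side, w_cls, b_cls, w_stop, b_stop)
print(class_probs.shape)  # (20,)
```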

Code

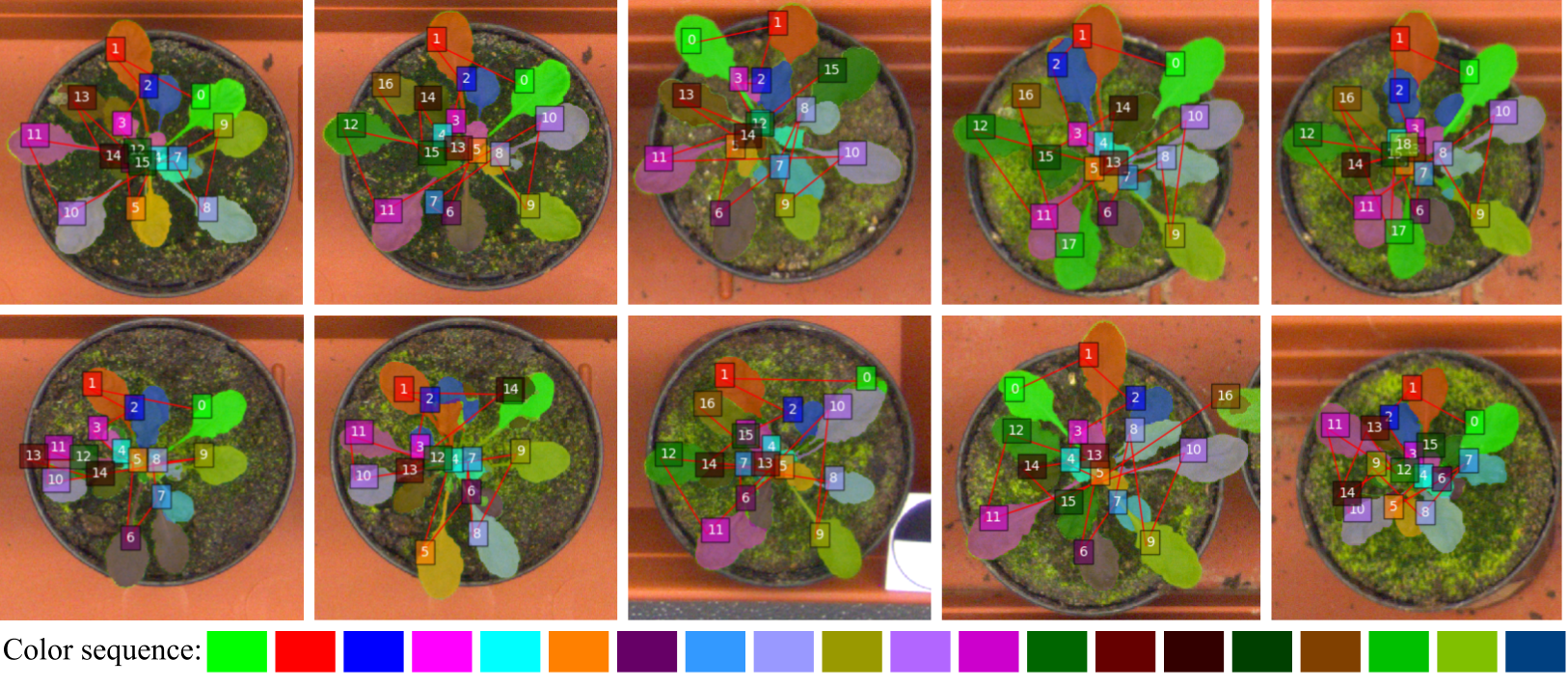

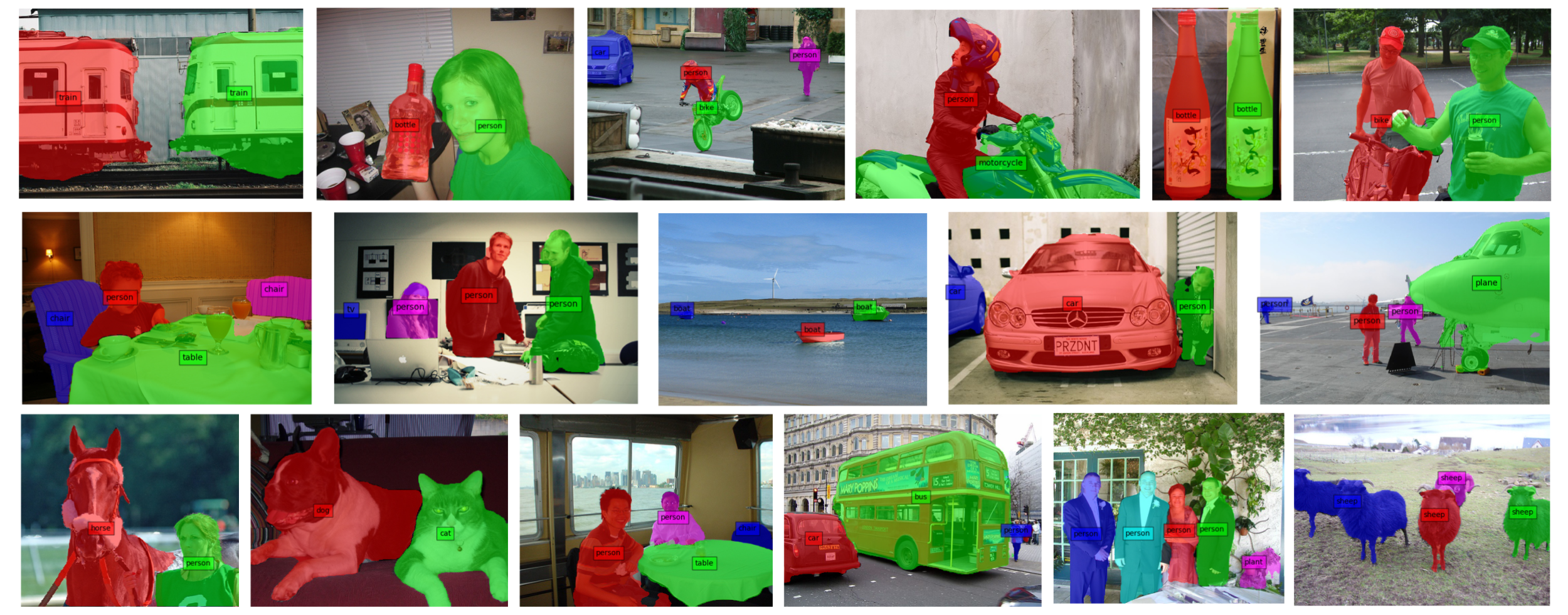

Examples

We provide examples of predicted object sequences for the three datasets.

CVPPP

Pascal VOC

Cityscapes

Mask colors indicate the order in which each mask was predicted.

Acknowledgements

We especially want to thank our technical support team:

We gratefully acknowledge the support of NVIDIA Corporation with the donation of the GeForce GTX Titan Z and Titan X used in this work.

The Image Processing Group at the UPC is an SGR14 Consolidated Research Group recognized and sponsored by the Catalan Government (Generalitat de Catalunya) through its AGAUR office.

This work has been developed in the framework of projects TEC2013-43935-R and TEC2016-75976-R, financed by the Spanish Ministerio de Economía y Competitividad and the European Regional Development Fund (ERDF).